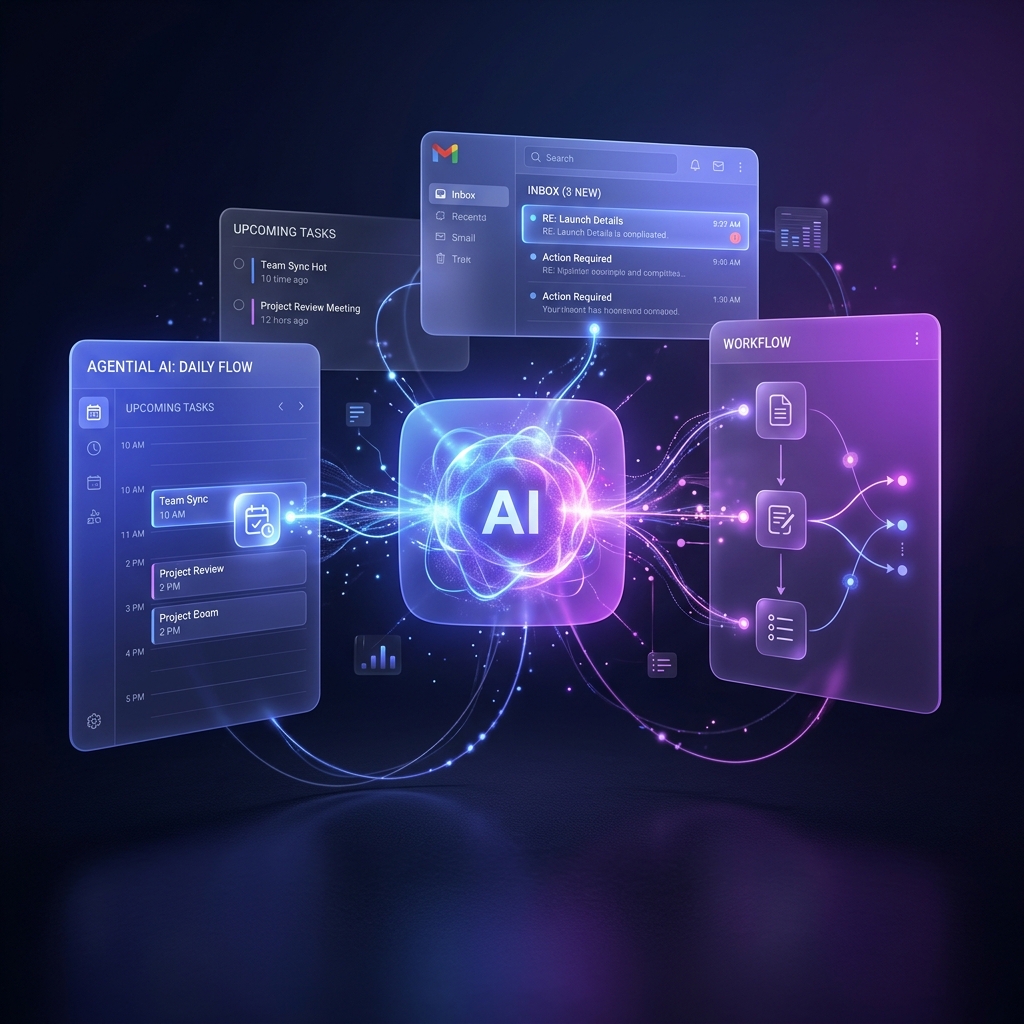

Artificial intelligence is shifting from reactive chatbots to proactive assistants. But what exactly is “agentic AI”, and how could it change your daily workflow?

Moving Beyond the Chat Box

For the past year, we’ve interacted with AI mostly through a chat interface. You type a prompt, it responds, and then it waits for your next instruction. This is helpful, but it still requires continuous human direction.

Agentic AI represents the next leap. Instead of just answering questions, an agent can take an objective, break it down into steps, and carry out those steps across multiple tools, all with minimal supervision.

> Key takeaway: A chatbot talks to you. An AI agent does work for you.

Everyday Scenarios

Here is how agentic AI is starting to appear in our everyday tools:

- Email Triage and Drafting: An agent can read incoming emails, categorize them by urgency, and draft replies based on your past correspondence style. You simply review and hit “send.”

- Calendar Tetris: Instead of asking “when are you free?”, an agent can negotiate with another person’s agent to find a mutually agreeable time, book the meeting, and send out the invites.

- Research Synthesis: When you need to understand a new topic, an agent can search the web, read multiple articles, synthesize the core arguments, and present you with a formatted summary document.

The Human in the Loop

The rise of agentic AI does not mean handing over the keys completely. The most effective systems follow a “human in the loop” approach: the agent does the heavy lifting, while the human sets the boundaries, provides course correction, and gives final approval for critical actions.
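To make the “human in the loop” idea concrete, here is a minimal Python sketch of an agent working through a plan of steps and pausing for sign-off before anything critical. It is an illustration under simplifying assumptions, not how any particular product works: the plan is hard-coded (a real agent would generate it with a language model), and the tool names `categorize`, `draft_reply` and `send`, the `Step` class and the `run_agent` function are all hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    tool: str        # name of a hypothetical tool the agent will call
    argument: str    # input passed to that tool
    critical: bool   # True if a human must approve before this step runs


def run_agent(plan: list[Step],
              tools: dict[str, Callable[[str], str]],
              approve: Callable[[Step], bool]) -> list[str]:
    """Run each step in order, asking the human to approve critical ones."""
    log = []
    for step in plan:
        if step.critical and not approve(step):
            # The human declined, so the agent skips this action.
            log.append(f"skipped {step.tool}: not approved")
            continue
        result = tools[step.tool](step.argument)
        log.append(f"{step.tool}: {result}")
    return log


# Hypothetical toy tools standing in for real email integrations.
tools = {
    "categorize": lambda text: "urgent" if "asap" in text.lower() else "routine",
    "draft_reply": lambda text: f"Draft reply to: {text}",
    "send": lambda text: f"sent '{text}'",
}

# A hard-coded plan; in practice the agent would produce this itself.
plan = [
    Step("categorize", "Please review ASAP", critical=False),
    Step("draft_reply", "Please review ASAP", critical=False),
    Step("send", "Thanks, on it.", critical=True),
]

# Simulate a reviewer who declines to send anything yet.
log = run_agent(plan, tools, approve=lambda step: False)
for line in log:
    print(line)
```

The routine steps (sorting and drafting) run on their own, but the `send` step is held back because the simulated reviewer did not approve it. Swapping in an `approve` function that actually asks a person is what keeps the human in the loop.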

As these tools become more integrated into our operating systems and daily apps, the skill of managing an AI agent will become just as important as knowing how to prompt a chatbot.

🚀 Ready to Bring AI Into Your Organisation?

Learn how to safely deploy Agentic AI tools within your team’s workflow.